Therac-2025

Reflections on Bugs and Doing Things Half-Assed

Mistakes are part of our lives. Experience confirms this, and so do proverbs and folk wisdom: “Only those who do nothing make no mistakes”, “to err is human”, “only fools never make mistakes”, and so on. This makes sense, because errors are simply an unavoidable part of the learning process.

However, there are areas of human activity where the tolerance for mistakes is very low. As the saying goes, a bomb disposal technician only makes a mistake once. A surgeon can go to prison for a mistake. A judge can destroy someone’s life with a wrong decision. A programmer, on the other hand, can make mistakes freely, because bugs are treated as a natural by-product of their work, even if the software controls critical tasks in a nuclear power plant or a ballistic missile with a nuclear warhead.

Not bad, not terrible

I am not claiming that it is possible to build perfect software: bug-free, accounting for every edge case, and resistant to cyberattacks. We operate on so many layers of abstraction built on top of the physical reality of the computer (silicon, atoms, electrons) that it would probably be impossible.

However, I do believe that:

- many bugs and vulnerabilities could be avoided if organizations implemented basic QA processes at every stage of the SDLC

- errors in software behavior and the general enshittification of software are the result of widespread and commonly accepted sloppiness in the IT industry — from the people writing the code, through managers, testers, architects, etc.

I think we all know the pattern: code written in a hurry to make the release deadline. Meaningless tests done half-heartedly just to reach the required code coverage percentage. QA departments eliminated as a cost-saving measure. Vague documentation, usually outdated anyway. System architectures detached from reality. Development of critical components outsourced to random (as long as they’re cheap!) software houses or AI agents. Doing things “good enough” just to close a task that was badly estimated during a Scrum poker session and send the invoice. Rampant agile — sloppy, without a plan, but done in sprints. A culture of misinterpreted performance — understood as delivering the goal without verifying its quality or long-term consequences.

I would like to believe it doesn’t look like this everywhere, but my own observations and conversations within the industry suggest that such phenomena are widespread. The acceptance of mediocrity and the high tolerance for poor workmanship in IT have a real impact on all of us. Every day we use dozens of devices that run some kind of software, from phones and smartwatches, through washing machines and refrigerators, to cars and ATMs. That’s why I claim that we all bear the consequences of poorly delivered software.

Never mind that companies spend fortunes maintaining unoptimized, illogical applications, servicing technical debt, and patching holes in systems. Never mind that fixing bugs is often the main job of programmers. So what if some ERP system doesn’t work again, reports fail to generate, or a game crashes. These are relatively harmless consequences. Even if it’s software used for delivering chlamydia test results, I still wouldn’t consider that a truly serious consequence of poorly designed software.

But imagine that software written carelessly and without proper safety procedures is responsible for your life, health, or finances. That changes the perspective somewhat — and situations like this have already happened.

I decided to describe several spectacular bugs and bad practices in software development as a warning. They show what can happen when software is insufficiently thought out and tested. I found so much material that I had to split this article into two separate posts. One major inspiration for choosing this topic was Matt Parker’s book “Humble Pi” (published in Polish as “Pi razy oko”, Warsaw 2021), in which the author describes many software bugs from a mathematician’s perspective. I highly recommend it.

~~Healing~~ Killing software

Let’s start with a heavy example. Between 1985 and 1987, software in the Therac-25 radiotherapy machine delivered massive radiation overdoses in at least six known accidents, several of which were fatal. Equipment designed to heal ended up killing.

The manufacturer of Therac-25 — Atomic Energy of Canada Limited — made several disastrous design decisions during its development. First, the device’s software and hardware were tested in isolation. The equipment was assembled entirely in hospitals, and only there were integration tests performed.

Second, the Therac-25 software was written in PDP-11 assembly and was based on legacy code from the Therac-6 and Therac-20 devices. Unlike previous versions, Therac-25 had no additional hardware safety interlocks, so protections against, for example, delivering a lethal radiation dose existed only in software. That was a fatal design decision, because it relied on the assumption that the software was written flawlessly and anticipated every edge case — which in reality rarely happens. Therac-25 failed precisely because of such an exception.

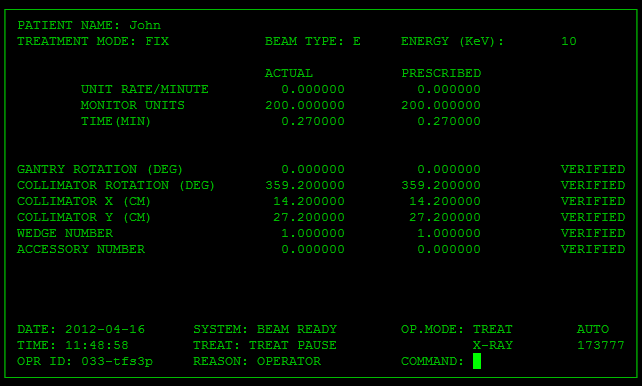

Therac-25 graphical interface

The device could operate in two modes depending on the treatment goal and method:

- mode 1 for generating a low-energy electron beam

- mode 2 for generating a high-energy electron beam — requiring additional safeguards to avoid damaging healthy tissue

In Therac-25, a technician manually entered commands into the device terminal to switch between radiotherapy modes. The program queued these commands in a shared memory buffer and then sent instructions to the hardware. If a quick-fingered technician entered commands very rapidly, a classic race condition occurred. Two concurrent processes (keyboard input handling and treatment mode configuration) used the same memory resource.

This could lead to a situation where the display data was updated but the mode itself did not change. A technician could therefore see in the GUI that the machine was set to generate low-energy electrons, while the backend configuration had not actually changed. As a result, some patients were exposed to high-energy electron beams without any protection of healthy tissue, because — as mentioned earlier — Therac-25 had no additional hardware safety interlocks. Incidentally, introducing mutual exclusion mechanisms (mutexes) could have prevented simultaneous writes or reads of critical settings in the device.
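The failure mode described above can be sketched in a few lines of Python. This is a simplified illustration, not a reconstruction of the original PDP-11 code: the class names, the mode labels and the "snapshot" step are all assumptions made for the example. The unsafe version replays one unlucky interleaving by hand (so the result is reproducible), while the safe version uses a lock so that the displayed mode and the hardware mode can only change together.

```python
import threading


class UnsafeConsole:
    """Two tasks share state with no synchronization (hypothetical model)."""

    def __init__(self):
        self.displayed_mode = "high-energy"
        self.hardware_mode = None
        self._snapshot = None

    def begin_setup(self):
        # The setup task copies the requested mode once and works from the copy.
        self._snapshot = self.displayed_mode

    def operator_edit(self, mode):
        # The keyboard task updates the screen; the setup task never notices.
        self.displayed_mode = mode

    def finish_setup(self):
        # The stale copy is applied to the hardware.
        self.hardware_mode = self._snapshot


class SafeConsole:
    """Display and hardware mode change together under one lock."""

    def __init__(self):
        self._lock = threading.Lock()
        self.displayed_mode = "high-energy"
        self.hardware_mode = "high-energy"

    def set_mode(self, mode):
        with self._lock:
            self.hardware_mode = mode
            self.displayed_mode = mode

    def snapshot(self):
        with self._lock:
            return self.displayed_mode, self.hardware_mode


def demo_unsafe():
    console = UnsafeConsole()
    console.begin_setup()                # setup starts with "high-energy"
    console.operator_edit("low-energy")  # a fast edit lands mid-setup
    console.finish_setup()
    return console.displayed_mode, console.hardware_mode


def demo_safe():
    console = SafeConsole()
    modes = ["low-energy", "high-energy"] * 50
    threads = [threading.Thread(target=console.set_mode, args=(m,)) for m in modes]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return console.snapshot()
```

In this sketch `demo_unsafe()` ends with the screen showing the low-energy mode while the hardware is still configured for high energy. `demo_safe()` always returns a matching pair, regardless of the order in which the threads happen to run.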

Reproduction of the Therac-25 software bug

Moreover, the Therac-25 manual was terribly written. Some error messages displayed only the word “MALFUNCTION”, followed by a number between 1 and 64. The manual explained the codes very briefly and did not indicate which ones signaled a life-threatening situation for the patient. As a result, technicians learned to ignore vague warning signals. This tragic story is very well explained in a video on the Low Level channel.
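One way to avoid cryptic codes is to attach a severity and a plain-language instruction to every fault the software can report. The sketch below is purely hypothetical: the code numbers, descriptions, severity levels and function names are invented for illustration and are not taken from the Therac-25 software or its manual.

```python
from dataclasses import dataclass
from enum import Enum


class Severity(Enum):
    WARNING = 1
    CRITICAL = 2


@dataclass(frozen=True)
class Fault:
    code: int
    severity: Severity
    description: str
    action: str


# Hypothetical fault catalogue; real codes and meanings would come from
# the manufacturer's hazard analysis.
FAULTS = {
    7: Fault(7, Severity.WARNING,
             "Treatment table position not confirmed",
             "Re-check the table position before resuming"),
    21: Fault(21, Severity.CRITICAL,
              "Measured dose does not match the prescribed dose",
              "Stop treatment and call the medical physicist"),
}


def format_fault(code: int) -> str:
    """Build an operator-facing message that states severity and next step."""
    fault = FAULTS.get(code)
    if fault is None:
        # Unknown codes are treated as critical instead of being ignorable.
        return (f"MALFUNCTION {code} [CRITICAL] Unknown fault. "
                "Stop treatment and call service.")
    return (f"MALFUNCTION {fault.code} [{fault.severity.name}] "
            f"{fault.description}. {fault.action}.")


def may_resume(code: int) -> bool:
    """Only known, non-critical faults may be dismissed by the operator."""
    fault = FAULTS.get(code)
    return fault is not None and fault.severity is not Severity.CRITICAL
```

The design choice worth noting is the default: a code missing from the catalogue blocks treatment rather than letting the operator continue, so gaps in the documentation fail safe.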

If you think Therac-25 is ancient history, think again.

A very similar case occurred at the National Oncology Institute in Panama City in 2001. Twenty-eight patients received excessive radiation doses during treatments planned with RTP/2, radiotherapy treatment-planning software produced by the American company Multidata Systems International. In this case, the culprit was deploying a poorly designed fix to production.

Operators of RTP/2 had to manually enter the number of so-called protective blocks, the shields used in radiotherapy to reduce radiation exposure to healthy tissues and organs. Later investigations showed that the software allowed technicians to enter values that were incorrect and dangerous to patients’ lives.

Initially, the system allowed a maximum of four blocks to be entered into a single field. At the operators’ request, the manufacturer increased this limit to five. Unfortunately, the validation rules for input data then broke down. The system allowed entering the total number of protective blocks into one field as if they were a single indivisible module. This distorted the calculation of other parameters, including irradiation time. As a result, patients were exposed to radiation longer than they should have been, with insufficient protective shielding.
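A minimal sketch of the kind of input validation that was missing might look like the following. Only the limit of five blocks comes from the description above. The function names, the exception type and the rule that every declared block must be entered as its own outline are assumptions made for the example, not the behavior of the real RTP/2 software.

```python
MAX_BLOCKS = 5  # limit mentioned in the text; everything else is assumed


class BlockInputError(ValueError):
    """Raised when operator input cannot be used for dose planning."""


def validate_block_count(raw: str) -> int:
    """Parse the operator's input and reject anything outside 0..MAX_BLOCKS."""
    try:
        count = int(raw.strip())
    except ValueError:
        raise BlockInputError(
            f"block count must be a whole number, got {raw!r}") from None
    if not 0 <= count <= MAX_BLOCKS:
        raise BlockInputError(
            f"block count must be between 0 and {MAX_BLOCKS}, got {count}")
    return count


def validate_blocks(raw_count: str, outlines: list) -> int:
    """Cross-check the declared count against the outlines actually entered."""
    count = validate_block_count(raw_count)
    if len(outlines) != count:
        # Several blocks merged into one entry would be caught here.
        raise BlockInputError(
            f"{count} blocks declared but {len(outlines)} outlines entered; "
            "enter each block separately")
    return count
```

The important property is that invalid input stops the workflow with an explicit error before any derived parameter, such as irradiation time, is calculated from it.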

The lack of proper input validation mechanisms led to the deaths of 8 patients and caused serious health complications in another 20. The technicians operating the machine were accused of murder by the victims’ families. I was unable to find information about what ultimately happened to them.

As it is in heaven

Today, most passenger aircraft use fly-by-wire control. In short, this term describes a method of controlling an aircraft that somewhat resembles a flight simulator game. The pilot moves the control stick, but it is not mechanically connected to the aircraft’s control surfaces. The pilot’s movements are transmitted to the onboard computer, where the real magic happens: signals from the stick are processed, modified to best match the flight parameters, and then transmitted via cables to the actuators that move control surfaces such as the ailerons. Reliable software in fly-by-wire systems is fundamental to passenger safety.
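To make the idea concrete, here is a toy sketch of the "computer in the middle" step. It is not how any real flight control law works: the function name, the speed threshold and the deflection limits are invented for illustration, and real systems use far more inputs and redundancy. It only shows the principle that the pilot's input is a request which the software adjusts before commanding the actuator.

```python
def aileron_command(stick: float, airspeed_kts: float) -> float:
    """Map a stick deflection in [-1, 1] to an aileron angle in degrees."""
    # Discard out-of-range sensor values instead of passing them on.
    stick = max(-1.0, min(1.0, stick))
    # Hypothetical rule: allow full deflection at low speed, less at high speed.
    max_deflection_deg = 25.0 if airspeed_kts < 250 else 10.0
    return stick * max_deflection_deg
```

For example, with these made-up limits a full stick deflection yields 25 degrees at 200 knots but only 10 degrees at 300 knots, and a faulty reading of 2.0 is treated the same as a full deflection of 1.0.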